AnythingLLM

Opens anythingllm.com

Overview

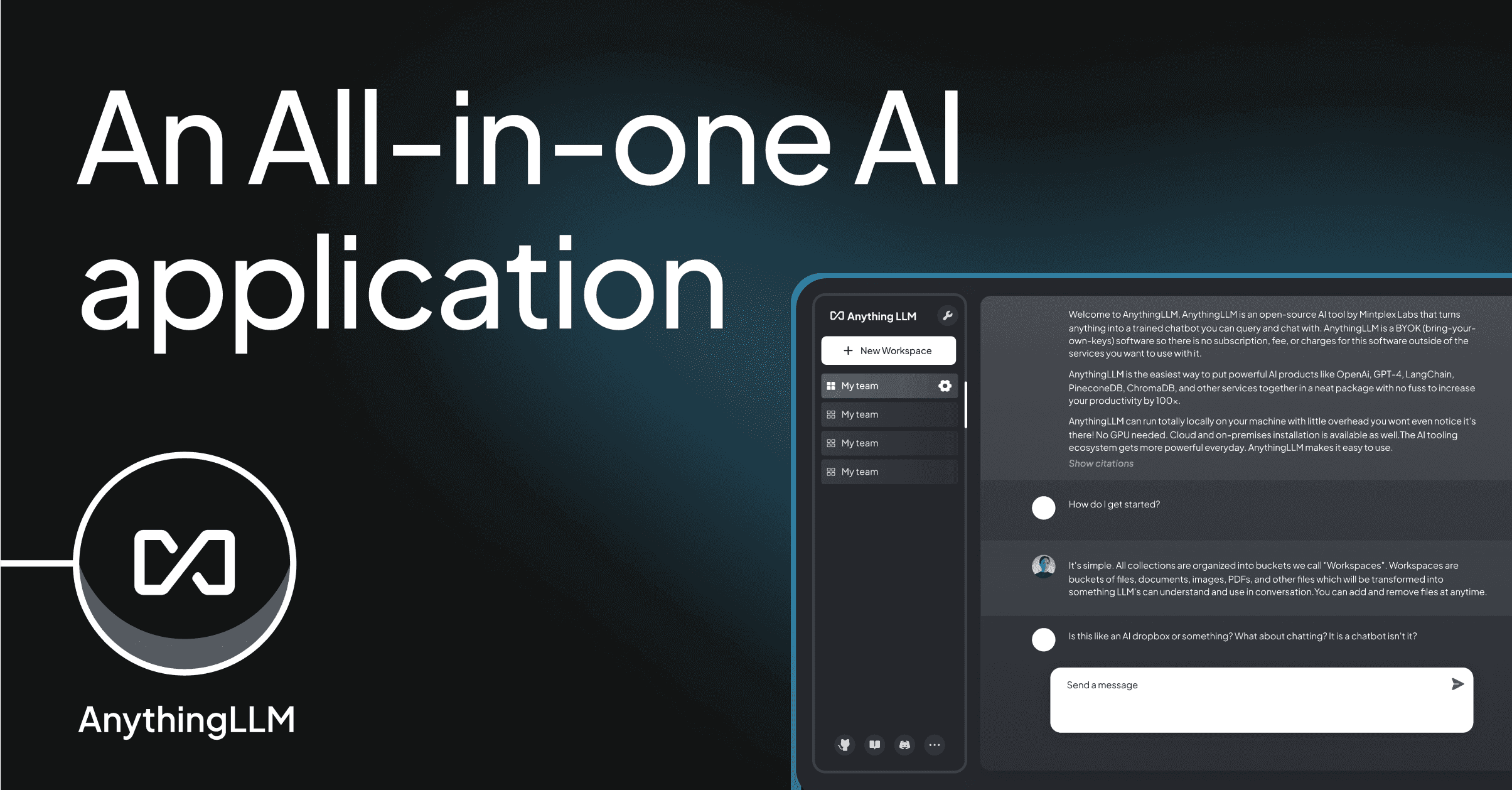

AnythingLLM packages retrieval-augmented generation (RAG) as a desktop app: ingest documents, embed them, and chat over them with the model provider of your choice. It is a pragmatic middle ground between notebooks and a full product build.

It’s useful for teams who want private experimentation without standing up a bespoke stack.
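
To make the ingest, embed, and chat loop concrete, here is a minimal sketch in Python. It is not AnythingLLM's code or API: the function names `embed`, `ingest`, `retrieve`, and `build_prompt` are hypothetical, and the bag-of-words "embedding" is a toy stand-in for a real embedding model. The final prompt is what a RAG tool would send to whichever chat model you pick.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): lowercase word counts instead of a real model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def ingest(docs: list[str]) -> list[tuple[str, Counter]]:
    """Ingest step: store each document next to its embedding."""
    return [(doc, embed(doc)) for doc in docs]


def retrieve(index: list[tuple[str, Counter]], question: str, k: int = 1) -> list[str]:
    """Retrieval step: return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(index: list[tuple[str, Counter]], question: str) -> str:
    """Chat step: prepend retrieved context to the question for the model."""
    context = "\n".join(retrieve(index, question))
    return f"Context:\n{context}\n\nQuestion: {question}"


index = ingest([
    "Invoices are due within 30 days of receipt.",
    "The office is closed on public holidays.",
])
prompt = build_prompt(index, "When are invoices due?")
print(prompt)
```

In a real deployment the embedding and the final chat call are handled by the configured providers; the sketch only shows how retrieval narrows the documents before the model sees the question.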

Works with

Integrations and model surfaces called out for this listing.

- Claude

- Gemini

Features

- Desktop and self-host options

- Document ingestion pipelines

- Multi-model provider support

- Workspace isolation

Pros & cons

Pros

- Quick private RAG demos

- Flexible provider choice

- Active OSS community

Cons

- Not a turnkey enterprise suite

- Performance tuning required

- UI is utilitarian

Reviews

Average 4.7 / 5 from 3 reviews

- Priya K. (Verified)

AnythingLLM saved us hours each week. Onboarding was straightforward and the defaults matched how we already work.

- Jordan L. (Verified)

Solid experience with AnythingLLM. A few rough edges on edge cases, but support and updates have been steady.

- Sam R. (Verified)

We evaluated several options and kept AnythingLLM for the quality bar and integrations. Would recommend trying the paid tier if you’re serious.